Deploying Fast, Thinking Slow

Why cloud decisions get expensive when they outlive their context

If you sit in the middle of cloud and finance long enough, you start to notice that the timelines are different.

Shipping is fast. Bills are slow.

Environments spin up from pipelines. Agents run on managed runtimes. Containers land on platforms with a couple of clicks. Most of those choices don’t feel like “big spend decisions.” They feel like the normal rhythm of shipping: what gets this done today, with acceptable risk.

On the FinOps side, life is busy in a different way.

Scopes keep widening: public cloud, data centers, SaaS, AI workloads. The language has evolved to keep up, as teams try to reason about all of it within one economic frame. Reporting improves. Allocation gets cleaner. The surface area keeps expanding.

Surveys tell a similar story: most large organizations say they have a FinOps practice in place, and the top priorities now are reducing waste, managing commitment-based discounts, forecasting more accurately, and making sure all spend is fully allocated… including AI and sustainability work.

And still, when you talk to people doing the work, the same pattern comes up.

Cloud spend grows. Budgets get stressed. A noticeable slice of spend is still labeled waste or “hard to explain.” Reports go out. Dashboards exist. Yet cost often arrives in the conversation a little later than everyone wishes it did.

At some point you have to ask: if everyone is “doing FinOps,” why does it still feel like this?

How decisions actually get made

If you watch a team work, the tricky stuff usually isn’t a giant architecture bet. It’s the small, reasonable choices that accumulate:

“Let’s keep that test environment around in case we need it.”

“Double the resources for this migration so we don’t get paged all night.”

“Give autoscaling more headroom; we’ll tune it once things calm down.”

Nobody is trying to burn money. The priorities are uptime, customer experience, hitting dates, staying out of incident channels. Cost is in the picture, but it’s rarely the loudest voice.

From a finance view, what shows up are the downstream effects: a larger baseline, more variability, awkward trends that appear disconnected from any single decision. By the time those numbers surface, the behavior that caused them already feels like “this is just how the system works.”

Tools help, but they don’t change that basic timing problem on their own.

The missing dimension: how long choices last

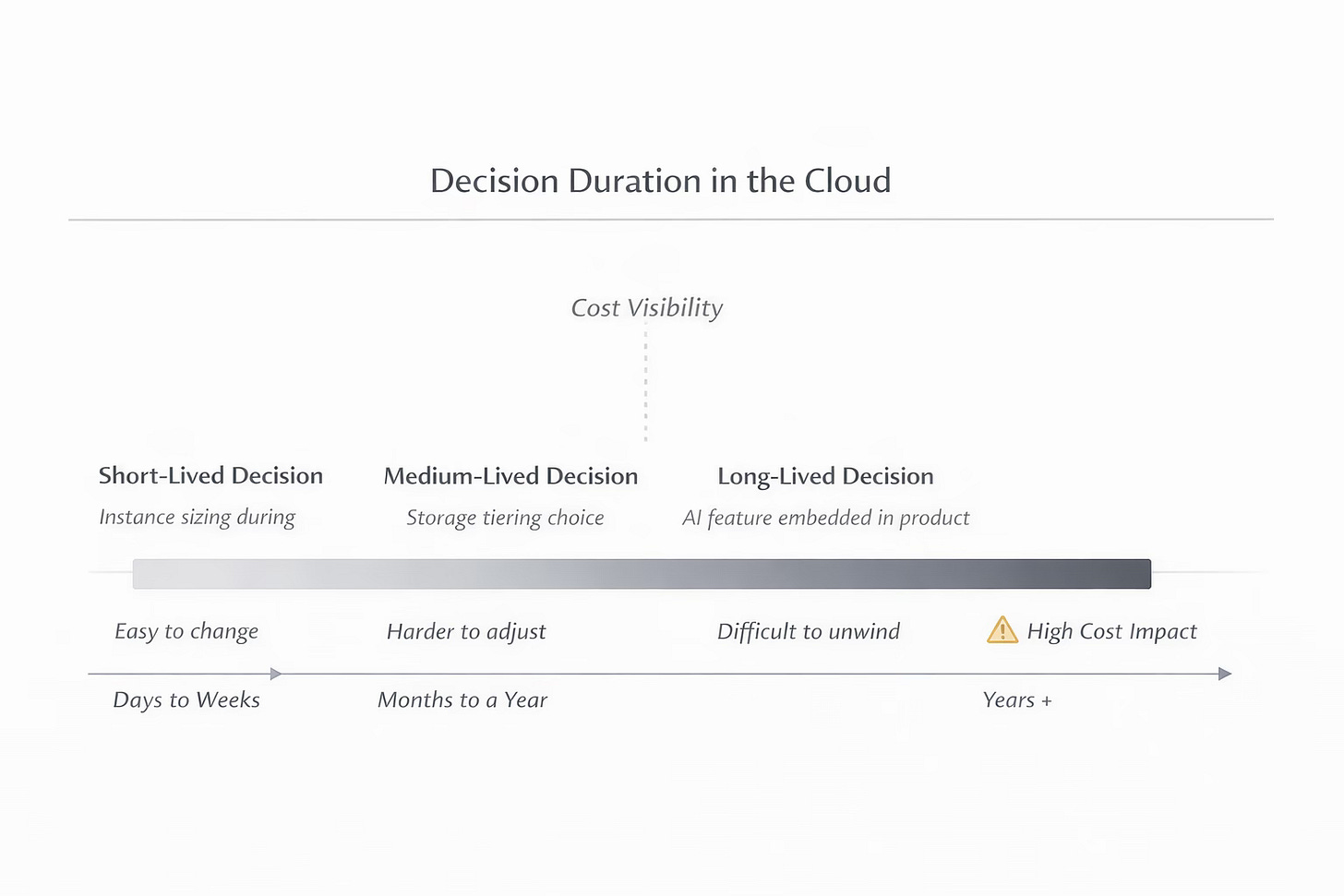

One way to think about this is decision duration.

In finance, duration tells you how sensitive something is over time. There’s a similar idea hiding inside cloud decisions: how long a choice can sit in production before it becomes expensive or painful to change.

Some examples:

A slightly oversized instance that everyone is aware of and plans to tighten later has a short duration.

A storage pattern that looks fine now but turns into a mess during a real recovery has a longer and more dangerous duration.

A “temporary” AI feature that quietly becomes central to a product – with its own GPUs, data flows, and retraining habits – carries a very long duration, because backing out of it means unpicking business expectations, not just infrastructure.

Most teams don’t talk about it explicitly, but you can feel it. A change that touches decision duration lands differently from a change that only moves this month’s bill.

FinOps has matured in measurement. It still has room to mature in how it shapes the lifespan of decisions.

Cost where people actually look

There’s been a growing push, especially from the observability side, to put cost next to performance and reliability in the tools teams already use. LogicMonitor’s recent “cost-intelligent observability” work is one example of that trend: bring billing data into the same operational views engineering and ops teams stare at all day.

Done well, that isn’t “one more dashboard.” It shortens decision duration.

If a deploy goes out and suddenly you need almost twice the infrastructure to serve the same traffic, that shouldn’t be a mystery that turns up in a month end review. It should be visible the same afternoon, in the same place people already look to see whether the system is behaving.

If an environment has gone quiet but still burns through a line item every day, that should show up in the tools teams already use to check health. Not as a guilt trip, just as a nudge: this hasn’t been touched in weeks, do we still need it?

The point isn’t to turn engineers into budget owners. It’s to put cost in the same line of sight as everything else they own, while there is still room to adjust without kicking off a nine month migration.

AI makes the timing problem sharper

AI is where all of this gets more interesting.

FinOps research and conference agendas have been leaning hard in this direction: “FinOps for AI” workstreams, FinOps X keynotes about AI scopes, and new guidance around Cloud Unit Economics that ties marginal cost to marginal value for cloud-delivered software. Cloud providers are open about the same thing from their side: AI workloads are one of the fastest growing parts of spend and they don’t behave like the older patterns FinOps grew up around.

You get:

Bursty inference when usage spikes

Shared GPU pools and complex scheduling

Shortening retraining cycles as teams push for fresher models

Data moving between services in ways that don’t map neatly to old tagging schemes

On day one, an AI feature often feels small and experimental. On day 300, it can look like a permanent part of the product, with real expectations around uptime and quality. The economics bend as usage spreads: memory, storage, traffic, and retraining all start to matter more.

If you only look at cost after those expectations harden, your options narrow quickly. That’s decision duration again, just in a new costume.

This is where small, practical questions pay off:

If this feature does ten times the volume next year, are we still comfortable with where the money lands?

As retraining moves from quarterly to weekly, does the storage and data movement still make sense?

When this AI service drifts from “nice add-on” to “core workflow,” who is actually responsible for its economics?

FinOps for AI isn’t just forecasting. It’s making sure someone is asking those questions before scale and habit lock in.

What “good” might look like

When you look across recent FinOps talks, tools, and survey data, the teams that seem to be making real progress tend to share a few traits:

Cost shows up early. Not as a veto, but as one of the inputs in design, alongside reliability and performance.

Signals live where people already work. Engineers don’t have to go hunting for cost; it’s present in the same views that tell them whether a system is healthy.

There’s language for aging. People can say, “this is fine for six months, but we shouldn’t build the next three projects on it,” and everyone understands what that implies.

AI isn’t a blur. AI workloads are treated as specific economic objects with owners, not a mysterious “ML line” on a bill.

Commitments and waste are deliberate topics, not background noise. Teams know which spend is intentional, which is optional, and which is just inertia.

You don’t need a giant new framework to start moving in that direction. You need a few well chosen places in the workflow where someone can see, with reasonable clarity, when a decision is about to outlive the context that made it sensible.

Where my own work sits in this

I’m spending this year building small tools and experiments around these ideas: how to keep cost close to the work, how to make aging decisions visible, and how to give people better ways to reason about AI-heavy systems before they solidify.

The ambition isn’t to “solve cloud costs” in one shot. It’s much more modest: shorten the distance between “we made a reasonable choice” and “we noticed the moment it started to drift.”

If we can do that consistently, the bills get less surprising, the conversations get calmer, and the systems we build have a better chance of staying inside the range we actually meant to live in.